AI Is a Lossy Knowledge Format

Sometimes That’s Ok

FlightPath Server’s product launch is next week. It will take its place next to the FlightPath Data frontend as a key link in the premier data preboarding solution.

At the end of the last FlightPath launch, as the dust settled, I looked at some of the other folks on Product Hunt. Two of them jumped out at me for combining AI and data extraction. Both tools took unstructured or semi-structured data and ran it through LLMs to generate validated data. That hit close to home. Here’s why.

Some years ago I led the product management function for a company that collected unstructured data, processed it, and sold data feeds. To spare all concerned (innocent, guilty, and bystanders) I’ll say we dealt in classified ads data. Remember classified ads? I don’t, but I hear they were cool.

We made parsers that took raw ads and turned them into structured data in an internal XML format. The XML files were aggregated in document and relational databases and ultimately sold as CSV files or through APIs and other software. It was a good business. We were considered the best at it.

The teams creating and operating these products were about 300 strong at their peak, give or take. They used NLP, semantic search, rules-based expert system ontologies and logic, and deep learning. We sold our products to Google and lived in fear of Google creating a competing product and kicking us to the curb. Ultimately that happened, but not the way I’d expected.

In 2017, as everyone knows, Google decreed that attention is all you need. Within four years, the world was on fire with AI. I’d moved on from classified ads to another vertical search and data company with a similar NLP-heavy tech stack, but in a different domain. Eventually I had to compare and contrast the layered NLP->search->deep learning approach I’d been using with the new LLM and RAG ways of doing the same thing.

I started with just getting structured data from a classified ad using one of the new LLM APIs. I forget which, but for the record, I love Claude. :) Creating a test harness to use the LLM API to process a file took only about 20 minutes from a cold start.
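A harness like that can be very small. The following is a minimal sketch, not the code I actually wrote: the prompt, the field names, and the `call_llm` parameter are all assumptions for illustration, and `fake_llm` is a canned stand-in so the example runs without any vendor SDK. In real use you would pass in a function that wraps whichever LLM API you prefer.

```python
import json
from typing import Callable, Iterable

# Hypothetical prompt and field names, chosen only for illustration.
PROMPT = (
    "Extract the fields title, price, and location from this classified ad. "
    "Reply with a single JSON object and nothing else.\n\nAd:\n{ad}"
)

def extract_ad(ad_text: str, call_llm: Callable[[str], str]) -> dict:
    """Send one ad to the model and parse the JSON reply."""
    reply = call_llm(PROMPT.format(ad=ad_text))
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        # Keep the raw reply so a bad response can be inspected later.
        return {"error": "unparseable reply", "raw": reply}
    if not isinstance(data, dict):
        return {"error": "reply was not a JSON object", "raw": reply}
    return data

def process_ads(ads: Iterable[str], call_llm: Callable[[str], str]) -> list[dict]:
    """Run every non-blank ad (for example, each line of a file) through the model."""
    return [extract_ad(ad, call_llm) for ad in ads if ad.strip()]

def fake_llm(prompt: str) -> str:
    """Stand-in for a real API call; always returns the same canned answer."""
    return json.dumps({"title": "Oak desk", "price": "$40", "location": "Boston"})

if __name__ == "__main__":
    ads = ["Oak desk, good condition, $40, pick up in Boston", ""]
    for record in process_ads(ads, fake_llm):
        print(record)
```

Because `process_ads` accepts any iterable of strings, an open text file with one ad per line can be passed in directly.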

After about 30 minutes I stopped. I had convinced myself that I had just created a better classified ad parser than a team of 40 people had built over about a decade. I was floored!

Back to the present and product launches. I saw two products on Product Hunt that were in the CSV data extraction space. Since FlightPath, my own new launch, sits in an adjacent data management space, they caught my eye.

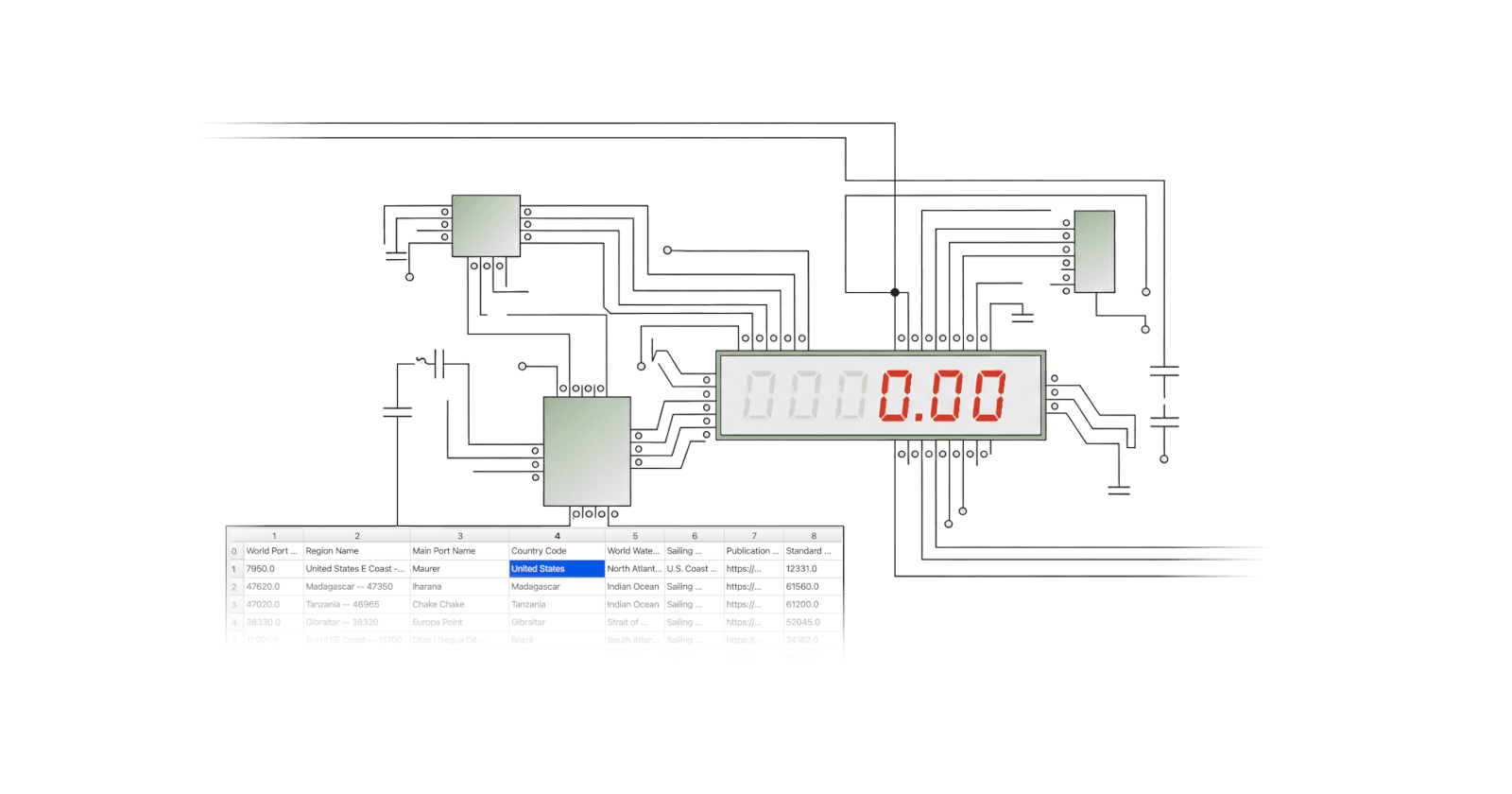

FlightPath is the frontend for the open source CsvPath Framework. It is a data preboarding tool. What is data preboarding? I’m glad you asked!

The first step is ingesting new knowledge

Data preboarding is a more specific term for ingestion or onboarding. Basically, getting new raw data into the enterprise takes two steps, at a high level: preboarding and loading. Preboarding shifts left a set of activities that, unfortunately, often happen only after the data is already available in the data lake or data warehouse:

- Registering the data with durable identity in an immutable staging area
- Validating and upgrading it in an idempotent way to ideal-form raw data
- Generating metadata for full explainability and provenance
- Publishing the metadata and data in an immutable trusted publisher for downstream consumers
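To make those four steps concrete, here is a toy sketch in plain Python. It is not the CsvPath Framework API and not how FlightPath is implemented. The function names, the use of a SHA-256 content hash as the durable identity, and the shape of the metadata are all assumptions made for illustration.

```python
import csv
import hashlib
import io
import json

def register(raw: bytes) -> str:
    """Durable identity (assumed here to be the SHA-256 of the exact bytes received)."""
    return hashlib.sha256(raw).hexdigest()

def validate_and_upgrade(raw: bytes, required: list[str]) -> tuple[list[dict], list[str]]:
    """Trim whitespace and report structural problems.

    The same input always produces the same rows and the same errors.
    """
    reader = csv.reader(io.StringIO(raw.decode("utf-8")))
    header = [h.strip() for h in next(reader, [])]
    rows, errors = [], []
    for n, values in enumerate(reader, start=1):
        if len(values) != len(header):
            errors.append(f"row {n}: expected {len(header)} values, got {len(values)}")
            continue
        row = dict(zip(header, (v.strip() for v in values)))
        missing = [name for name in required if not row.get(name)]
        if missing:
            errors.append(f"row {n}: missing {', '.join(missing)}")
        rows.append(row)
    return rows, errors

def preboard(raw: bytes, source_name: str, required: list[str]) -> dict:
    """Register, validate, describe, and package one delivery."""
    file_id = register(raw)
    rows, errors = validate_and_upgrade(raw, required)
    # A real system would write this package to immutable storage;
    # this sketch simply returns it to the caller.
    return {
        "metadata": {
            "id": file_id,
            "source": source_name,
            "row_count": len(rows),
            "errors": errors,
            "valid": not errors,
        },
        "rows": rows,
    }

if __name__ == "__main__":
    raw = b"sku, price\nA1, 9.99\nB2,\n"
    package = preboard(raw, "orders.csv", required=["sku", "price"])
    print(json.dumps(package["metadata"], indent=2))
```

The point of the sketch is the ordering: identity is fixed before anything touches the data, and the metadata travels with the rows so a downstream consumer can see exactly what was received and what was wrong with it.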

The point is that validation is central to the preboarding concern. And that’s where the conundrum lies. If LLMs can extract data so well, what is the point of parsers and NLP, or (my present concern!) a solid preboarding architecture? Anthropic would have you simply point Claude at a pile of unstructured text and create CSVs, or at a pile of CSVs and create knowledge and insight, and… profit!

My feeling is that there are two types of tools in this corner of the world. To oversimplify: those that are inherently lossy and those that, in principle, can never be wrong. As you would imagine, I believe LLMs can and should eat up essentially all the use cases for lossy tools. And, conversely, I would never (or at least not for the next few years) let an AI attempt to muscle in on handling the never-get-it-wrong use cases.

The thing with the classified ads is straightforward. The LLM gave me 90% correct data on the admittedly small trial I did. The tools I helped build almost 10 years ago would do well to get into the 70% range, downhill with the wind. And that was considered Ok.
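I haven’t said how “correct” was scored, so take this as one plausible way to do it rather than a record of what I did. A simple option is field-level accuracy: compare each extracted field against a hand-checked answer and count the matches. The function name, the normalization (trimmed, case-insensitive), and the sample records below are all assumptions for illustration.

```python
def field_accuracy(extracted: list[dict], expected: list[dict], fields: list[str]) -> float:
    """Fraction of (record, field) pairs where the extracted value matches the expected one."""
    if len(extracted) != len(expected):
        raise ValueError("extracted and expected must have the same number of records")
    total = correct = 0
    for got, want in zip(extracted, expected):
        for name in fields:
            total += 1
            # Compare trimmed, case-insensitive strings so cosmetic differences don't count.
            if str(got.get(name, "")).strip().lower() == str(want.get(name, "")).strip().lower():
                correct += 1
    return correct / total if total else 0.0

if __name__ == "__main__":
    # Made-up records for illustration only; not data from the trial described above.
    expected = [
        {"title": "Oak desk", "price": "$40", "location": "Boston"},
        {"title": "Road bike", "price": "$250", "location": "Salem"},
    ]
    extracted = [
        {"title": "oak desk", "price": "$40", "location": "Boston"},
        {"title": "Road bike", "price": "$25", "location": "Salem"},
    ]
    score = field_accuracy(extracted, expected, ["title", "price", "location"])
    print(f"{score:.0%} of fields correct")
```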

70% was a lot higher than 0% and required just milliseconds to do. Without the parser you’d be cutting and pasting or re-keying. Neither alternative was tenable. The expectation was that the data would be pretty ugly. And there was an acceptance that people would never be satisfied. So long as people paid for the data in the end, it didn’t matter about the sausage-making.

But now the sausage-making is gone. Something like 40 FTEs * $50,000 (average; we were a global company), or roughly $2 million in product development salaries alone, goes away. The output gets to 90% correct. Time to market dives into the floor. And the main concern now is the performance of an API that you don’t have to run yourself, unlike the old API, which was equally performance-challenged and which you did have to run yourself. Crazy! Crazy awesome.

Sometimes kinda-sorta is not Ok

But that’s just the lossy side. When a much-beloved AI, which shall remain nameless, recently got into an out-and-out disagreement with me over whether Boston is the capital of Massachusetts, I found myself rolling my eyes and laughing. (For the record, it is.) When I’m looking at my tax returns, medical records, or credit card statement, I am decidedly not in a lossy mood and I’m not laughing. If we’re talking about generating candidate insights from a million anonymized records, sure, precision isn’t the issue. But when we care specifically about one record, it manifestly is the issue.

The world is full of data that should be immutable, idempotent, deterministic, and explainable. It should be specified in detail and held to spec rigorously at each stage of a well-understood lifecycle and journey. This is where, when the data is in CSV or Excel, CsvPath Framework is the right tool for the job. Obviously LLMs cannot perform acceptably in that world. By design, they are not the right tools for those cases.

So is there no value to LLMs in a world of predictable precision where Things Must Be Correct? Far from it. LLMs are great at generalizing the high-dimensional predictions that go into creating forward-looking rules based on past results. If you settle on a highly structured form of data constraint definition for the LLM to use, even better. As many of us know, LLMs are good at predicting acceptable code for specific cases. The constraints of a structured language reduce the opportunities for LLMs to give results of varying quality. And those constraints help engineers nudge, refactor, and test the code into production form efficiently.

Crystallize an LLM prediction in code and you have productivity with protection. This is essentially the best of both worlds. An LLM can quickly write candidate rules for CSV or other data processing chores based on examples and sample data. A data engineer can quickly see if the LLM is talking trash or spot on. If the former, adjust the prompt or ask the question another way. And at runtime, the result of executing a rule or schema against actual data is deterministic and provably correct.
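Here is a rough sketch of what crystallizing looks like, written in plain Python rather than CsvPath Validation Language. The rule format, the column names, and the helper functions are hypothetical. Imagine the `RULES` dictionary was proposed by an LLM from sample data, then reviewed and committed by an engineer. From that point on no model is involved: the same file always produces the same report.

```python
import csv
import io
import re

# Candidate rules as an LLM might propose them after seeing sample data.
# Once reviewed and committed they are just data; no model runs at validation time.
RULES = {
    "sku": {"required": True, "pattern": r"[A-Z]\d+"},
    "price": {"required": True, "min": 0.0},
}

def check_row(row: dict, rules: dict) -> list[str]:
    """Return a list of rule violations for one row (empty if the row is clean)."""
    problems = []
    for name, rule in rules.items():
        value = (row.get(name) or "").strip()
        if not value:
            if rule.get("required"):
                problems.append(f"{name}: missing")
            continue
        if "pattern" in rule and not re.fullmatch(rule["pattern"], value):
            problems.append(f"{name}: {value!r} does not match {rule['pattern']}")
        if "min" in rule:
            try:
                number = float(value)
            except ValueError:
                problems.append(f"{name}: {value!r} is not a number")
            else:
                if number < rule["min"]:
                    problems.append(f"{name}: {number} is below {rule['min']}")
    return problems

def validate_csv(text: str, rules: dict) -> dict[int, list[str]]:
    """Return {row_number: problems} for every data row that breaks a rule."""
    report = {}
    for n, row in enumerate(csv.DictReader(io.StringIO(text)), start=1):
        problems = check_row(row, rules)
        if problems:
            report[n] = problems
    return report

if __name__ == "__main__":
    sample = "sku,price\nA1,9.99\nb2,-1\nC3,abc\nD4,\n"
    for row_number, problems in validate_csv(sample, RULES).items():
        print(row_number, problems)
```

If the LLM’s first draft of the rules is wrong, that shows up in review or in tests against known-good and known-bad files, not in production data.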

All that said, how do I feel about products that purport to directly use LLMs to create data excellence? Mixed. For certain use cases, it’s a no-brainer. For others, not so much.

In fact, LLM data munging is not where FlightPath and CsvPath Framework live. We deal in precision and predictability at scale. Still, for many purposes where data outcomes can be approximate or inspired, LLMs are a perfect fit. Similarly, when generating rules and schemas from sample data, we find LLMs are great users of CsvPath Validation Language, just so long as their work can be crystallized in well-tested rules. We’re all about that!