Box == Pony == ETL. Really?

A conversation with a talented quadruped about an ETL box

I had two unusual but illuminating conversations in the past few days about with engineers about CsvPath Framework and data preboarding. One of them made me do a double-take. You'll like that one, I promise. The other conversation was less surprising, less amusing, and followed a common fallacy.

Where Does Governance Begin?

In many companies, data is the most critical asset. When do you start protecting your assets? A what point do you start to care if the input that determines your success in the market is actually under control and at a sufficient level of quality?

In many cases, perhaps most cases, data file preboarding is handled within or after data is ETLed into production databases. That means files are accepted unconsidered and unregistered and immediately pushed into a new context where their data is mixed with production data and hard to trace. Why do we do that? Largely because it’s the path of least resistance.

The point is, we weld ETL to the edge of our data estate because we have a convenient tool that allows us to skimp on pre-work. Just load and go. The illusion of speed with the surety of the roulette wheel.

Who Does Governance Begin With?

Here's what one engineer recently said to me:

There is nothing wrong with your framework, but I'm really just a one-trick pony. I have developed a robust generic T-SQL process that imports, processes, and stores CSV/Excel data. It's just a pure T-SQL ETL engine with no front-end and no other technologies

On one hand, I love this! He's got a successful tool and don't-fix-it-if-it-ain't-broke attitude. Moreover, T-SQL qualifies as "boring technology", which is often the thing you want. Boring is tested, bulletproof, and widespread for a reason. I might not question the tech choice here, even if they definitely wouldn't be mine.

But on the other hand, so many things! Setting aside the questionable self-appellation "one trick pony", there's a lot to be concerned about. Apparently his tool has been used over and over. That fits our observation that a lot of companies have a preboarding approach that is simplistic. A lot of companies!

The question is, if the data looks right, does it matter if it isn't? And if it matters, does it matter that there is no paper trail to show where the process went wrong? You know my answer.

How Unitary Is Data Loading, Really?

Is loading data a unit of work? Maybe. My second conversation this week didn't land any classic know-thyself quotes about talented quadrupeds. Instead I got stuck on a box. Possibly a soap box.

The box was on a data flow diagram that showed how a file feed was received and processed through steps into an analytics database. There were several steps and four or five systems. Multiple steps were in a layered data lake. The usual stuff, joining, selecting, mastering, etc. But I got stuck on the first one because it was sourced from a cloud and pushed to a data lake and was labeled ETL.

One box at the edge doing one unit of work to get the lovely datas from outside the enterprise parameter into the data lake. We put more layers between a browser and a webapp, even though each browser request typically has virtually no impact on the enterprise as a whole. Ok, bad analogy, but still. Each time this ETL box lights up it presumably has the potential to cause real havoc downstream.

This is the golden-hammer problem combined with the feeling that if the sky has never fallen on my own head, it probably isn't ever going to fall. When the requirement is to move some data, it is just too easy to grab the ETL tool at-hand — be it T-SQL, Informatica, Glue, Airbyte, or a notebook — and move the darn data so you can close the ticket. Too easy + too unsafe == too risky and too slow.

We Need Better Requirements!

The reality is, that requirement is wrong or at least incomplete. In virtually every case of handling a data file feed we need to deliver a few things.

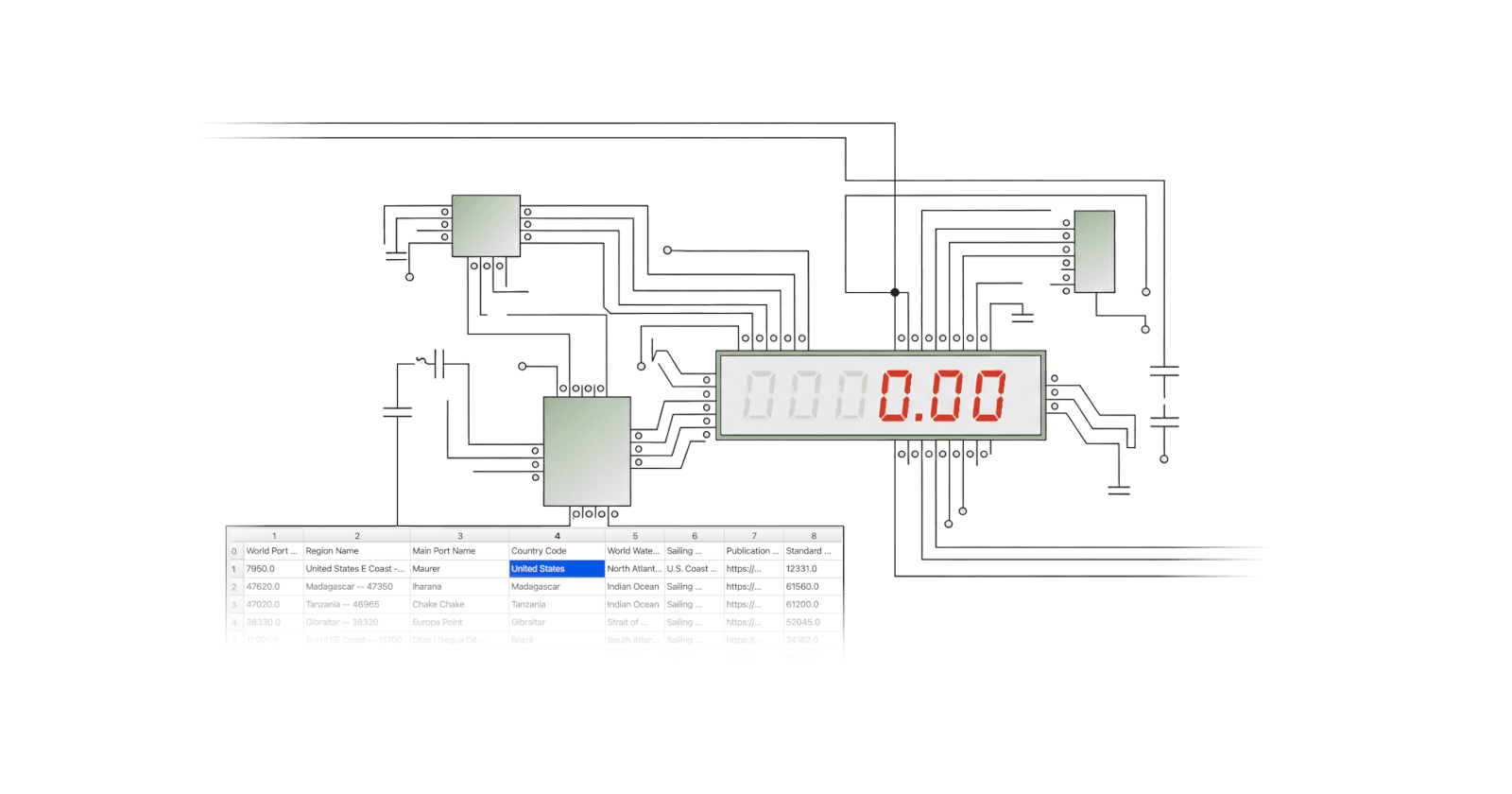

| Need | How | Why |

| Provenance | We capture when a file arrived, from where, how, with what name, to what location | We do this so we know who to call if there are problems and how identify what we received so they can find a solution and resubmit the data. |

| Identification | Preboarding registers data in its arrival form giving the specific bytes an identity that is durable throughout the data lifecycle. | We do this so there is no confusion about the start of the data lineage and no breaks in the lineage as the data progresses. Without an identity two files labeled March-2025-invoices.csv with different content due to restatements or fixes cannot be distinguished, making forensics nearly impossible |

| Immutable staging | When a file lands it is registered under an identity and moved to a unique location in immutable staging | Immutable data is data that can be recovered, replayed, and reasoned about. There is no hesitation about updating software because the data is always available to recover to. Forensics are easy because we identify bytes that never change due to copy-on-write semantics. Moreover, copy-on-write is inherently easier to program, so more agile, higher quality, and more debuggable. |

| Validation | Preboarding has validation as a core delivery. | We validate in preboarding in order to do two things: 1) to raise quality, and 2) to reduce manual effort, and thereby raise agility and lower costs. |

| Idempotent upgrading | As we validate we have an option to upgrade fields and field values. Ideally we do upgrading in an idempotent way, enabled by data immutability, that allows us to rewind/replay when requirements change or problems are found. | For data to be useful the individual datum must be comparable and Interpretable. Preboarding offers the opportunity to canonicalize fields and values as the validation happens. While not validating is unlikely and counterproductive, upgrading is entirely optional, and may boost productivity. |

| Lifecycle events | Preboarding throws off metadata events about states and transitions. | You cannot manage what you don’t measure. We want monitoring and alerting on the data, separate from the monitoring and alerting we have on the OS, database, and applications. The preboarding system should log events like validation errors, validation stages, lifecycle changes, etc. That way we can monitor the data operations, not just the data operations machinery. |

| Metadata collection | All the steps above and more are captured to a metadata store for future reference. Lineage, validations, the who, what, when, where, etc. of the data preboarding lifecycle. | Without capturing virtually every detail of the preboarding process we lose information that we might want during triage and forensics down the road. Moreover, we want to provide downstream data consumers all the details and assurance they need to trust the data they pull from the preboarding archive. |

| Archival publishing | The output of preboarding is pristine raw-form data, along with metadata explaining the source, journey, and current state, in an immutable archive queryable from downstream. | We need immutability in this last step just as much as in the first. The downstream consumer needs to be able to find, interpret, trust, and access every version of every data set quickly and easily. |

CsvPath Framework was built specifically to do all of these things precisely because these are the things we’ve been bitten by over and over, collectively at many companies.

What’s In the Box?

That ↑ is a lot for one box to carry. When I see a single box or one-trick pony standing in for robust data preboarding my hair starts to smoulder and the gears grind as I try to imagine all of preboarding fitting in one little box. When I point to the box and ask, often I get a blank look or — and I may be imagining this — the suspicion that I’m over-complicating things.

But here’s the thing, unless you live in a world where all data partners are trustable and mistakes are never made, you’re going to have to spend effort on this stuff. It’s a pay me now, or pay me later thing, but there’s no escaping the doing-the-whole-job tax, unless you have a special circumstance I’m not eligible for that makes fixing problems not your problem. If that’s not the case, open the box. Check inside. There must be a pony in there somewhere.

Then come find me. I’ve got a pre-built preboarding tool your pony can pull into production pronto.