Collect, Store, Validate, Publish

Is your ingestion built on this data preboarding pattern?

For many companies, ingesting data is a foundational risk. Think about it: if your business is built on collected data, what happens when you fail to control the raw data feeds? Garbage in, garbage out. And who wants to be selling garbage? For those companies, getting ingestion right is existential.

Those of us who build systems based on data aggregation, processing, analytics, and/or monetization channels know you need a well-thought-out architecture. For delimited file-based systems, the architecture to beat is called Collect, Store, Validate, Publish, or CSVP for short.

CsvPath Framework provides a prebuilt preboarding process. It acts as the trusted publisher to your data lake, data warehouse, and applications. You can use the framework in many ways — it is flexible, but opinionated.

CsvPath is narrowly focused on one problem: the trillion-dollar challenge of data file ingestion. It tackles the problem using a single best-practice pattern: CSVP. That means it is quick to apply, broadly applicable, and consistent. If you have a data ingestion problem, that’s what you want to hear!

What Is Collect, Store, Validate, Publish?

Design patterns make it easy to reuse proven approaches and communicate about designs. The Collect, Store, Validate, Publish pattern publishes known-good raw data to downstream consumers. It fills the gap between MFT (managed file transfer) and the typical data lake architecture.

CSVP controls how files enter the organization. It also applies to any boundary where flat files move from team to team: the point where files stop being data in flight and become data at rest. That is typically the point where data is loaded into a data lake. You don’t want to be throwing garbage into a lake!

Architecture requirements

Data preboarding is about control. Control has a few fairly obvious requirements:

File landing

Data registration

Validation

Upgrading

Lineage metadata production

Archiving

Let's break them down.

File landing

When you receive files you need to put them somewhere. A CSVP system versions files because they may change over time: a new arrival becomes a new version rather than overwriting an earlier one. In this way, data becomes immutable. From file landing forward, CSVP uses copy-on-write to make operations safe and repeatable. The staging area creates a naming structure that promotes findability. Access to the staging area must allow queries based on version, name, order of arrival, and date. These queries serve as references for each CSVP activity and for downstream data consumers.
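To make the landing step concrete, here is a minimal sketch of a versioned staging area in plain Python. It is not CsvPath Framework's API; the `land_file` and `list_versions` helpers and the `v0001`-style directory layout are assumptions made for illustration. Each arrival is copied into a fresh version directory, so earlier arrivals are never overwritten.

```python
import shutil
from pathlib import Path


def land_file(source: Path, staging_root: Path, named_file: str) -> Path:
    """Copy an arriving file into the next version slot under its logical name."""
    home = staging_root / named_file
    home.mkdir(parents=True, exist_ok=True)
    # Count existing version directories to pick the next number (hypothetical layout).
    version = sum(1 for p in home.iterdir() if p.is_dir()) + 1
    slot = home / f"v{version:04d}"
    slot.mkdir()  # raises FileExistsError rather than reuse an existing version
    target = slot / source.name
    shutil.copy2(source, target)  # copy; the landed file is never edited in place
    return target


def list_versions(staging_root: Path, named_file: str) -> list[Path]:
    """Return every landed copy of a named file in order of arrival."""
    home = staging_root / named_file
    return sorted(f for slot in home.iterdir() if slot.is_dir() for f in slot.iterdir())
```

Landing the same name twice yields `v0001` and `v0002`, and the first copy keeps its original bytes. A production system would also need locking for concurrent arrivals, which this sketch leaves out.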

Registration

Files require a clear identity that follows the data they contain. Data needs a birth certificate and a social security number. This is the beginning of the data's lineage. The identity must be durable across changes and specific enough to identify versions of like data.
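One common way to get an identity that is both durable and version-specific is to pair a stable logical name with a content fingerprint. The sketch below assumes that approach; the `Registration` class and `register` function are hypothetical names, not part of CsvPath Framework.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Registration:
    named_file: str   # durable identity: stays the same across versions
    fingerprint: str  # SHA-256 of the bytes: distinguishes one version from another


def register(named_file: str, content: bytes) -> Registration:
    """Give a landed file an identity made of its logical name and content hash."""
    return Registration(named_file, hashlib.sha256(content).hexdigest())
```

Two deliveries of the same feed share a `named_file` but get different fingerprints when their bytes differ, and re-registering identical bytes produces an identical record.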

Validation

Data validation checks that data is well-formed, valid against a schema, in canonical form, and correct with regard to business rules. Data preboarding is a way of shifting data quality left. The earlier you can fail bad data, the easier and cheaper it is to correct it. Validation done right minimizes manual checking and speeds up delivery. Since manual checking and triaging bad data found by data consumers can eat up to 50% of data operations time, validation is a critical component of CSVP.
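The four kinds of checks can be illustrated with a small sketch using only Python's standard `csv` module. The header, the column names, and the rule that amounts must be positive are all invented for this example; CsvPath Framework expresses validation in its own language, which is not shown here.

```python
import csv
import io

EXPECTED_HEADER = ["order_id", "month", "amount"]  # hypothetical schema
MONTHS = {"Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"}


def validate(text: str) -> list[str]:
    """Return a list of problems found; an empty list means the data passed."""
    rows = list(csv.reader(io.StringIO(text)))
    if not rows or rows[0] != EXPECTED_HEADER:
        return ["schema: unexpected header"]
    errors = []
    for n, row in enumerate(rows[1:], start=2):
        # Well-formedness: every row has the same number of fields as the header.
        if len(row) != len(EXPECTED_HEADER):
            errors.append(f"row {n}: well-formedness: expected 3 fields, got {len(row)}")
            continue
        order_id, month, amount = row
        # Schema validity: values have the expected types.
        if not order_id.isdigit():
            errors.append(f"row {n}: schema: order_id must be an integer")
        # Canonical form: months use the three-letter abbreviation.
        if month not in MONTHS:
            errors.append(f"row {n}: canonical form: month {month!r} is not three letters")
        # Business rule (assumed for illustration): amounts must be positive.
        try:
            if float(amount) <= 0:
                errors.append(f"row {n}: business rule: amount must be positive")
        except ValueError:
            errors.append(f"row {n}: schema: amount must be a number")
    return errors
```

Returning every problem, rather than stopping at the first, gives the data partner one complete report to act on.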

Data upgrading

Data partners frequently send data that does not conform to expectations. Likewise, some data comes as expected but in a form that varies from in-house data. Often these inconsistencies can be easily fixed by small changes. For example, a field for the name of a month may require three characters, but one data provider always sends September as “Sept”. A small difference like this can be fixed, resulting in upgraded data.
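The “Sept” case can be sketched in a few lines of standard-library Python. The `upgrade` function, the `MONTH_FIXES` table, and the `month` column name are assumptions for illustration. The function returns new text and leaves its input alone, in keeping with the copy-on-write idea.

```python
import csv
import io

MONTH_FIXES = {"Sept": "Sep"}  # known quirks of one hypothetical data provider


def upgrade(text: str, column: str = "month") -> str:
    """Return a corrected copy of the CSV text; the input is not modified."""
    reader = csv.DictReader(io.StringIO(text))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=reader.fieldnames, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        # Replace a known variant with its canonical form; pass other values through.
        row[column] = MONTH_FIXES.get(row[column], row[column])
        writer.writerow(row)
    return out.getvalue()
```

Keeping the fixes in a small lookup table makes each upgrade explicit and easy to record as part of the data's lineage.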

Lineage metadata

Data lineage tracks data by durable identity as it moves from system to system and changes over time. Lineage includes version, operator, validation and upgrading scripts, timing, sequence of runs, and other indicators. These indicators explain exactly how each data artifact was created. Ultimately, downstream data consumers should be able to easily trace any unexpected data step-by-step back to where it entered the organization. This traceability makes triage efficient and actionable.
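As a rough sketch of what step-by-step traceability can look like, the example below records one event per processing step and links each event to the one before it. The `LineageEvent` fields and the `trace_back` helper are hypothetical; they do not reproduce CsvPath Framework's metadata or the OpenLineage event format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class LineageEvent:
    event_id: str
    parent_id: Optional[str]  # the step that produced this step's input; None at landing
    named_file: str           # durable identity of the data
    step: str                 # e.g. "land", "validate", "upgrade"
    script: str               # which validation or upgrading script ran
    operator: str             # who or what ran it
    at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def trace_back(events: list[LineageEvent], event_id: str) -> list[LineageEvent]:
    """Walk from a given event back to the point where the data entered."""
    by_id = {e.event_id: e for e in events}
    chain = []
    current = by_id.get(event_id)
    while current is not None:
        chain.append(current)
        current = by_id.get(current.parent_id)
    return chain
```

Given events for landing, validation, and upgrading, `trace_back` on the upgrade event returns the three steps in reverse order, ending at the landing.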

Archiving

The final step of CSVP is to publish the data and metadata to an immutable permanent archive. This is where downstream consumers find their data. CSVP’s querying capabilities allow consumers to use data references as their sources. These references are specific, consistent, and descriptive in a way that simple file system paths or URLs cannot match.
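To show why a reference can say more than a path, here is a minimal sketch that resolves a reference against a list of published results. The `name@selector` syntax, the `Published` record, and the `resolve` function are invented for this illustration and are not CsvPath Framework's reference language.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Published:
    named_result: str
    version: int
    path: str  # where the archive physically keeps the file


def resolve(archive: list[Published], reference: str) -> Published:
    """Resolve 'name', 'name@latest', 'name@first', or 'name@<n>' (hypothetical syntax)."""
    name, _, selector = reference.partition("@")
    matches = sorted((p for p in archive if p.named_result == name),
                     key=lambda p: p.version)
    if not matches:
        raise LookupError(f"nothing published under {name!r}")
    if selector in ("", "latest"):
        return matches[-1]
    if selector == "first":
        return matches[0]
    wanted = int(selector)  # a non-numeric selector raises ValueError
    for p in matches:
        if p.version == wanted:
            return p
    raise LookupError(f"{name!r} has no version {wanted}")
```

A consumer that asks for `orders@latest` keeps working as new versions are published, while one that pins `orders@1` always gets the same bytes.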

Why do we call CSVP a Trusted Publisher?

When we talk about downstream data consumers we’re primarily talking about the data lake, data warehouse, and/or applications. These consumers are systems of record. It is vital that they receive known-good data. While some data lakes are managed in a way that progresses data from raw to finished product — as in a Medallion Architecture — that progression should always start with data that is accepted.

Accepting raw data means deciding what ideal-form raw data looks like and verifying each new piece of data against that standard — or rejecting it as early as possible. This is what data preboarding is all about. Using a CSVP architecture to preboard your data requires you to spell out what good looks like. The payoff is two part:

You can scale down expensive, slow, and risky manual processing

You insulate the organization from problematic data and heroic firefighting

Preboarding is required because data partners you don’t control are inherently untrustworthy. They can and will change their data for internal reasons. Their interpretation and requirements will differ from yours. Their level of investment in their data operations will not match yours. And they will make mistakes you cannot overlook. Moreover, often they won’t tell you when change happens. You have to find out for yourself.

When you preboard your inbound data, you place the preboarding process between data producer and data consumer as an intermediary. From the downstream consumer’s point of view, the preboarding archive becomes the data publisher that they can trust.

How CsvPath Framework does CSVP

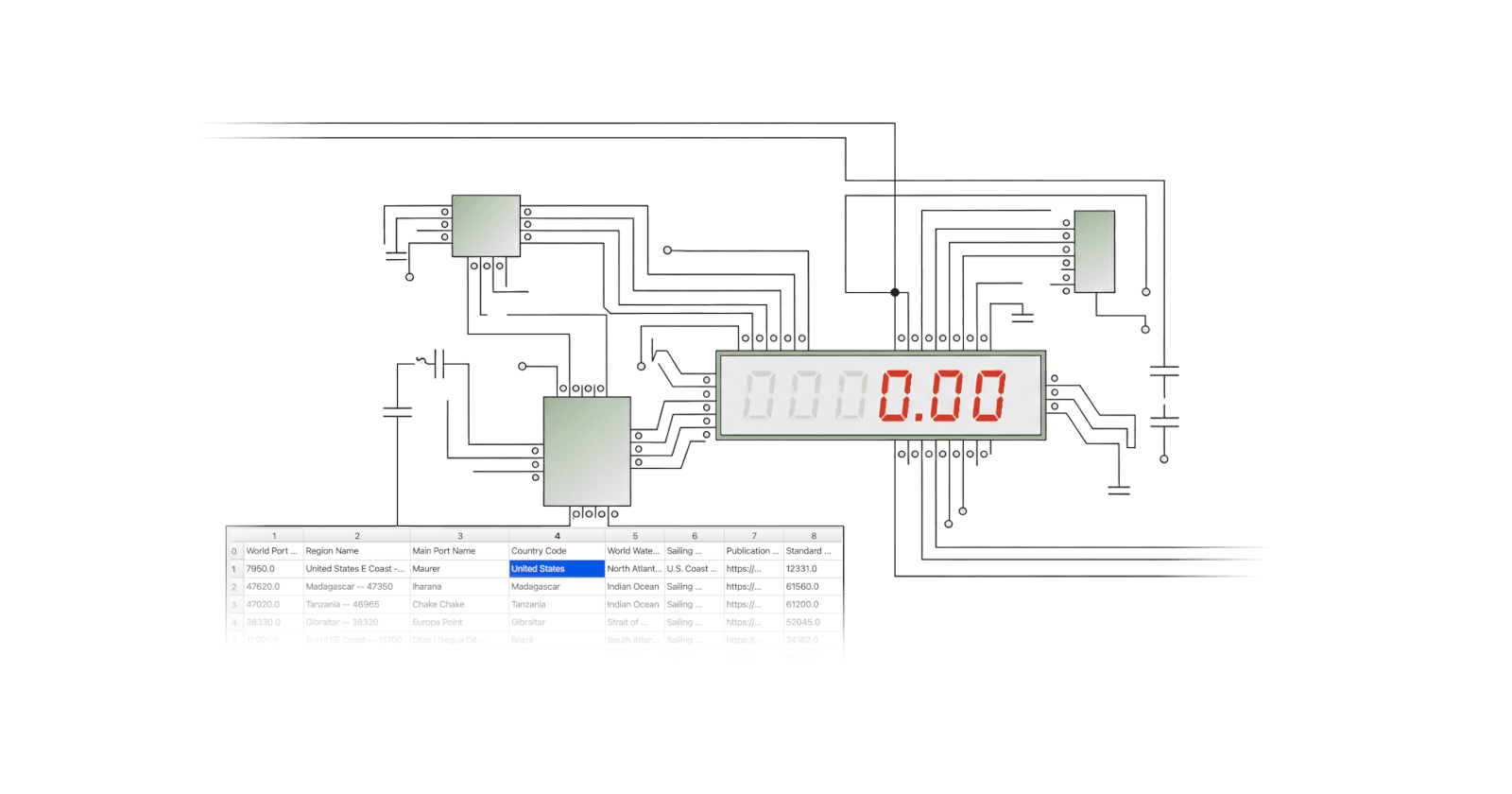

At a high level, the CSVP pattern looks like the picture above.

Sophisticated features

The features cover all the bases we laid out above:

Lightweight projects encapsulate different data partnerships for clarity

Data files are captured and maintained in an immutable, versioned staging area

Processes are simple, linear, and consistent across data partners

Data is checked for well-formedness, validity, canonical form, and correctness

Processing is idempotent, using copy-on-write semantics so data is never lost or untraceable

Rewind and replay allow for data fixes with confidence and without restarting from scratch

Metadata for provenance, lineage, and validity is captured at every step

Results are published as “ideal-form” raw data in an immutable permanent archive

If you read this and think, “How else would you do it?”, that is good! CSVP is an intuitive approach to controlling data ingestion. It helps make data preboarding a distinct stage with a focused, high-value goal.

In practice, though, many companies do not apply the pattern this clearly or intentionally, and many do not use it consistently across data partners. Of course, no pattern can cover every possible situation. We believe the CSVP pattern covers the majority of transaction-oriented, many-party, file-based, loose-integration situations, particularly those where one or more of the parties is technically weak for any reason.

A drop-in design

CsvPath Framework provides the CSVP architecture in a prebuilt, pre-integrated package. It is multi-cloud ready and set up for observability using any OTLP platform (e.g. Grafana) or OpenLineage collector (e.g. Marquez) out of the box. The full CSVP implementation comes in three components:

CsvPath Framework is a Python library, distributed on PyPI, that provides a complete programmatic CSVP solution

FlightPath Data is a Windows or macOS app distributed on the Microsoft and Apple stores

FlightPath Server is an API for upstream and downstream systems, distributed on WinGet and Brew

The components are all open source and available on GitHub.

In every way possible, CsvPath Framework works to be a drop-in replacement for a less developed system or a full solution for a greenfield situation.

Learn more about CsvPath Framework

For more details about how CsvPath Framework implements the Collect, Store, Validate, Publish architecture, hop over to the documentation. For information about FlightPath Data, check out https://www.flightpathdata.com.