Ingestion, ETL, onboarding, and preboarding

If you're not clear on these terms your data will suffer!

How many data partners do you work with day-to-day, week-to-week? Most companies exchange bulk data with more parties than they think. Payroll, orders, marketing automation, regulatory filings, inventory, web traffic, and many more activities can all require data exchange, whether occasionally or on a regular schedule. For information services companies, science organizations, service bureaus, and managed services partners, that is even more true.

Every time we want to make one of these data exchanges happen we have to pick an approach and a standard. Not infrequently, the approach is automated file transfer and the standard is CSV or Excel over HTTPS. The next question is how we get this data into our application, analytics, or AI. Get it right and business hums along happily, and evenings and weekends stay, well, evenings and weekends. Get it wrong and there’s a strong potential for all hell to break loose.

Onboarding as an end state

The purpose of collecting data you didn’t make is to perform transactions, make decisions, or sell it. To oversimplify, that all happens after the data is onboarded into an application, analytics tool, or AI. From that point of view, onboarding is basically an end state in user space. Once data has been onboarded it is usable and positioned for use.

The data’s journey to get to that onboarding is, of course, much longer than that last hop. It has to be ingested to a raw ready state and prepared for use. Typically that takes a bit of effort. Those two steps are data preboarding and ETL, or assembly (to reach for a slightly more general term).

The problem is… impatience

Three steps between you and anything is two steps too many, am I right? However, in the case of inbound data, impatience is a killer. Too many times we see data entering the organization essentially at the assembly step, that is, dropped right into the data lake or immediately ETLed somewhere. In organizations that have the time and talent to create their own applications, we see cases where data comes in and is immediately taken by an application without either of the prior two steps. Both of these shortcuts are ultimately problematic.

When an application pulls in data before the data passes through an assembly step there are a few possible problems. One is that the information becomes proprietary to that application, making its assembly into any other context more of a project. Another problem is that the opportunity for unified data governance is lost.

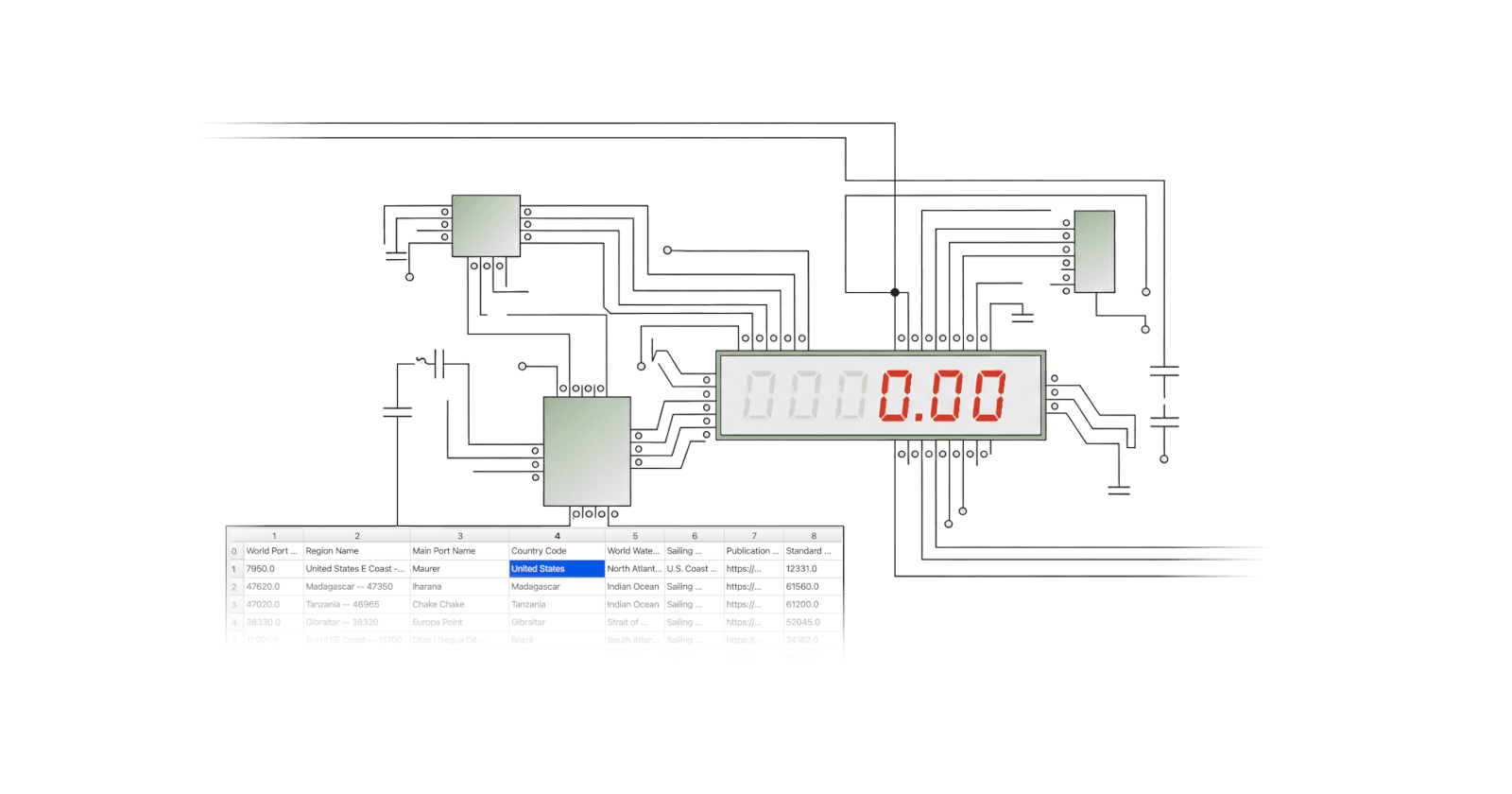

However, the biggest problem when data drops unconsidered into the application or the assembly stage is that the benefits and guarantees of preboarding are lost. Do you know exactly when you received the data, in what version, from which source through what channel, and with what corrections? Did it validate? Did it load cleanly? How many times did the job run and who ran it? Where is it stored for posterity?
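To make those questions concrete, here is a minimal sketch of the kind of record a preboarding step might keep for each arriving file. It uses only the Python standard library and is not CsvPath Framework's API. The names `ArrivalRecord` and `preboard`, the field layout, and the header-and-field-count check are all assumptions made for illustration; a real preboarding setup would validate far more and would also persist the file and the record.

```python
import csv
import hashlib
import io
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ArrivalRecord:
    """Hypothetical receipt answering: when, from whom, how, which bytes, valid?"""
    source: str
    channel: str
    filename: str
    received_at: str   # UTC timestamp in ISO 8601 form
    sha256: str        # fingerprint identifying this exact version of the file
    row_count: int = 0
    errors: list = field(default_factory=list)

    @property
    def valid(self) -> bool:
        return not self.errors


def preboard(data: bytes, *, source: str, channel: str, filename: str,
             expected_headers: list) -> ArrivalRecord:
    """Fingerprint the raw bytes and run a basic structural check."""
    record = ArrivalRecord(
        source=source,
        channel=channel,
        filename=filename,
        received_at=datetime.now(timezone.utc).isoformat(),
        sha256=hashlib.sha256(data).hexdigest(),
    )
    try:
        text = data.decode("utf-8")
    except UnicodeDecodeError as exc:
        record.errors.append(f"not valid UTF-8: {exc}")
        return record

    rows = list(csv.reader(io.StringIO(text, newline="")))
    if not rows or rows[0] != expected_headers:
        record.errors.append(f"unexpected headers: {rows[0] if rows else None}")
        return record

    # Every data row should have the same number of fields as the header.
    for row_no, row in enumerate(rows[1:], start=2):
        if len(row) != len(expected_headers):
            record.errors.append(
                f"row {row_no}: expected {len(expected_headers)} fields, got {len(row)}"
            )
    record.row_count = len(rows) - 1
    return record
```

Calling `preboard(file_bytes, source="acme", channel="sftp", filename="orders.csv", expected_headers=["id", "city"])` returns a record you can store next to the untouched file. Because the hash is taken over the raw bytes, a revised delivery under the same filename gets a different fingerprint, which is one simple way to tell versions apart.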

Now, you can do preboarding many ways. And it can be said that if received data is accepted for loading to anywhere, then it has passed preboarding. By that measure, if a file is ETLed successfully into a database you could say it has been preboarded as well as loaded. But has it been, really? You can put milk in a bottle and sell it, but that doesn’t necessarily mean it has been pasteurized.

The value of data preboarding

Preboarding is the process of taking raw unknown data and turning it into stable, well-known, and trustworthy raw data. Is that just make-work? Ask anyone who has lost track of a version of a file worth tens of thousands of dollars. Or who missed a filing deadline because it wasn’t clear which files came from what source on what day. Your AI may be happy to wait all day for you to finish a conversation about the employment numbers, but you’re not going to be happy if it quotes you the unrevised numbers from Q1 in Q3. Even less so if it combines Paris, TX with Paris, France. Uncertainty has consequences. And these things happen a lot.

In bad cases, up to 50% of a combined data engineering and business operations team’s time may be lost to firefighting data problems that get into the assembly stage or that are onboarded into applications, analytics, or AI. A firefighting load of 10% or more on every million dollars in data feed-tied revenue is, in many companies, seen as perfectly normal. And those are the average, or even above average, companies. That 10% to 50% comes directly out of profits and often grows linearly if not managed down.

The answer is straightforward: don’t be impatient, be methodical. Progressing inbound data step-by-step from preboarding to assembly to onboarding may feel slower, but, as the SEALs say, slow is smooth and smooth is fast. (Just agree. You don’t want to mess with SEALs.) And remember, zoomed out enough, setup is essentially a 1x cost, while operations is an Nx cost.

Taking a methodical approach to ingesting data is also not inherently expensive. There are always options for spending a ton of money and time on anything. But good open source tools exist (you’re reading the blog of one of them!) and the goal of preboarding is so cut, dried, and linear that the right answer is hard to miss. If you choose CsvPath Framework for preboarding your tabular files, the architectural pondering you’ll do approaches zero because the Framework was built to do exactly what you need. Add the FlightPath Data frontend and it’s even easier.

Hopefully, after all is said and done, your team is yearning for a more methodical and less manual approach. Preboarding gives you one. They will for sure appreciate less heroic firefighting. And your data will certainly thank you.