The Bermuda Triangle Of Data

A story of data preboarding disaster on the high seas

Let me tell you about a data ingestion problem caused by a faulty preboarding process. I'm changing a few details, but this is basically how it happened. Ultimately the teams got through it. Their data preboarding process got better. They lived to ingest another day.

The company

The company was an information services provider. They were a step up from a mere data broker: instead of selling raw data, they processed it into information and provided actions and analytics. The architecture was that of a vertical search engine. A vertically integrated mini-Google focused on one industry. They gathered raw data, processed it using bespoke NLP and ML, loaded it into a search engine of their own design, and provided rules- and AI-based insights on the search results. Like RAG, but with an inverted index rather than a vector db. Cool stuff.

And it was definitely a garbage-in-garbage-out situation.

The business

Let's call the company ShipmentInsights. They are without question the market leader in their niche.

ShipmentInsights’s customers accessed data and actionable insights about the shipping world through a search portal. A customer seeking an advantage in their market would assess rival shipping companies or insurance providers or shipping consumers to find arbitrage and patterns of activity that missed profitable opportunities. AI-based pattern-matching in a unique data set was the secret sauce that let ShipmentInsights find market imperfections.

Needless to say, selling a solution to imperfection raises the bar on your own internal processes.

The process

ShipmentInsights collected data in bulk. They received import/export data from ports, shipping companies, industry news outlets, catalogs and marketplaces, and brokers of various shipping-related goods and services. When the data arrived it would be parsed by the company's NLP, annotated, and stored in multiple states of analysis.

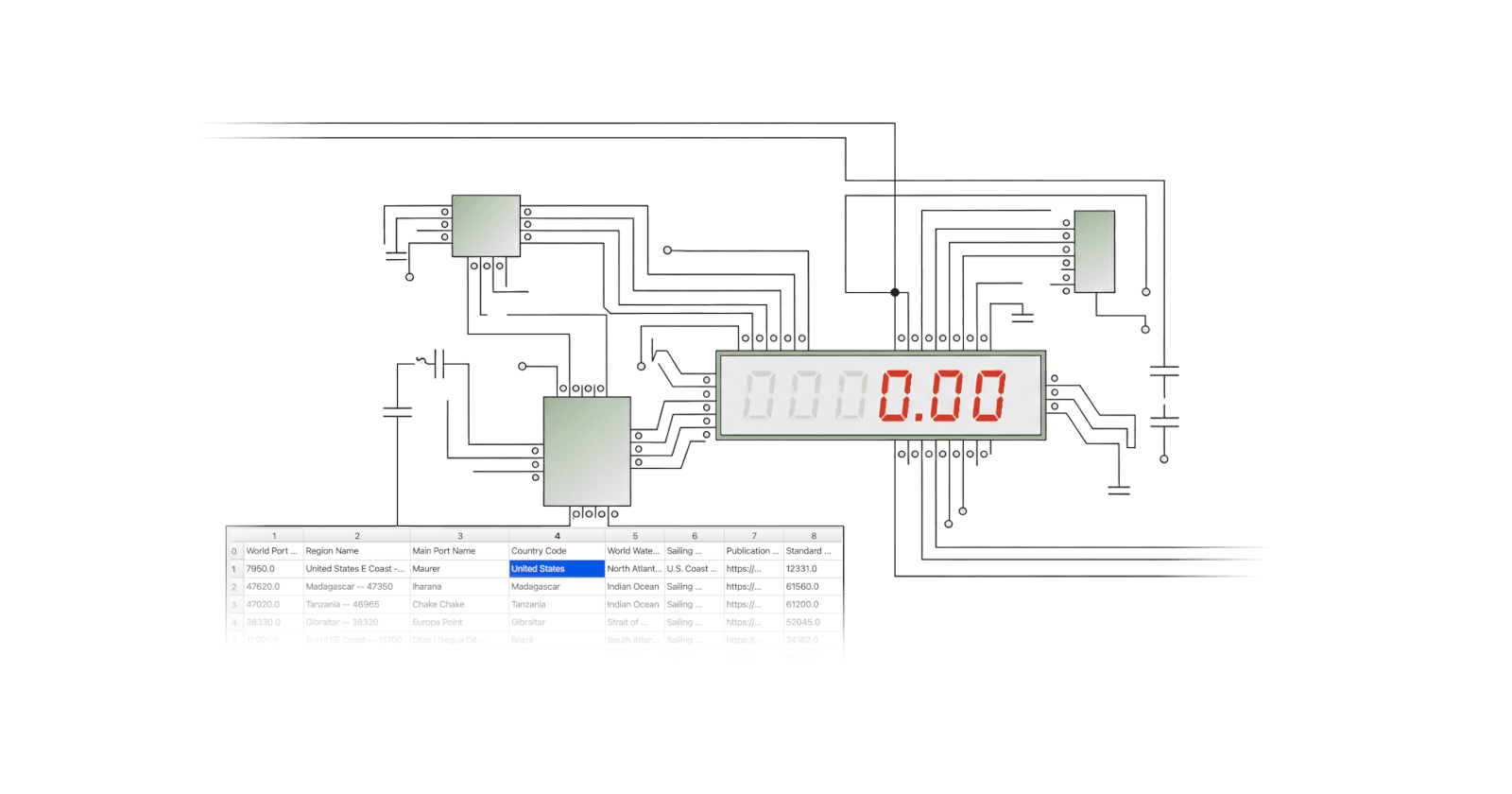

Most information came in monthly files; some came weekly. It was rare for the data to come via API, and the preference was for batches. Most data arrived as CSV files. Some as Excel. And a few sources used XML. All the data was stored in a data lake. Then through a many-step process it was upgraded and converted to tabular form and loaded into a data warehouse. Much of the upgrading process was manual, and quality control was likewise manual. The process required teams on three continents.

Whoops, where's my data?

The problem presented itself in the form of a puzzled and worried call to ShipmentInsights support. The customer was one of the big fashion houses, let's call them Couture Du Sol. Couture had seen a spike in their shipping costs that didn't make sense. It was coupled with routing delays that put their fall collection shipment deadlines in question. That the resulting problem was based on ShipmentInsights's data was easy to see and hard to argue with. The data was wrong.

Unfortunately for ShipmentInsights, Couture Du Sol was their largest customer, representing 4% of revenues. They wanted answers. And they wanted to know the details. How else could they trust the data going forward?

As you can imagine, multiple teams jumped into gear.

Almost three weeks later

It took a lot of digging. Not only were there in excess of 500 data feeds, there were three distinct spheres of data operations: US, EMEA, and Asia, each with their own DataOps and Operations teams. Each was distinctly different. The data flow diagrams were spaghetti. ShipmentInsights was not a young company. These systems had been manufacturing money for two decades.

Each data source was a partner, a vendor, or a passive public source harvested by ShipmentInsights systems. And each one had unique elements in its data flow, even though many feeds had much in common with one another. Each data file landed in a landing zone that was shared with other feeds. These landing zones were a bit crufty and produced inconsistent metadata. More importantly, each region had its own validation and upgrading strategies.

Data flows were worked on by human quality checkers, labelers, and cross-referencers. The manual work was done in bespoke tools that varied region to region. Some data was keyed. That keying and other low-skill manual work was farmed out to a team in the lowest-cost geo the company could find, but at the expense of more coordination costs and process complexity.

Long story short, The Data, I presume

Like Livingstone, the data was eventually and conclusively found. During the process of understanding the brittle points in how data was brought into ShipmentInsights, it became clear that multiple days' data had gone missing. At first it was thought to have been lost in the Indian Ocean. Ultimately, though, it became clear that the missing data went off radar over the Atlantic.

Critical parts of four non-contiguous days of production data updates had not been loaded. Frustratingly, the missing days were spread over three years prior to the complaint and had rippled forward in a cascade that happened to snare the largest and most demanding customer. Ain't that always the way?

How it happened

The US team's data arrived by SFTP on an MFT system. It mostly came by pull, in some cases by push, and in a small minority of cases from an internal process that itself pushed data to the MFT system. The files landed in a single landing zone that was bucketed by date and source. Many of the files were sent to the lower-cost team for basic cleanup. Those files arrived back in the landing zone, again over SFTP, in a slightly different place. A somewhat higher-value processing workflow took over from that point. That workflow resulted in updated files, and those files were loaded into a staging area of the data warehouse. From there the data was shared to EMEA and Asia. In the case of EMEA, the sharing happened as an export shipped over a message queue.

The message queue was approximately over the Bermuda Triangle. Data went missing.

Why did it take so long to come to light?

The biggest problem wasn’t that the preboarding was convoluted and manual. It turned out to be mainly a problem of data identity and manifesting.

The source data lacked clear identity and consistent metadata

The data was mutable and versions were poorly tracked

Data published internally was not cataloged in a way that was easy to monitor and cross check

In short, the DataOps team publishing data to EMEA was acting like a rough data aggregator but treated by EMEA as a trusted publisher. Nobody questioned their assumptions, but even if they had, what would they have checked to make sure EMEA got the goods advertised?
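One possible answer to that question is a manifest reconciliation: the publishing team lists what it sent, the receiving team lists what it got, and the two lists are compared. The sketch below is only an illustration under assumptions. The `ManifestEntry` fields, the feed names, and the `reconcile` function are hypothetical and are not taken from ShipmentInsights's systems or from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ManifestEntry:
    feed: str           # hypothetical feed name, e.g. "us_ports"
    business_date: str  # ISO date the file covers
    sha256: str         # checksum of the file's bytes


def reconcile(published, received):
    """Compare what the publisher says it sent with what the consumer holds."""
    pub = {(e.feed, e.business_date): e.sha256 for e in published}
    rec = {(e.feed, e.business_date): e.sha256 for e in received}
    return {
        # advertised by the publisher but never arrived
        "missing": sorted(k for k in pub if k not in rec),
        # arrived, but the bytes differ from what was advertised
        "altered": sorted(k for k in pub if k in rec and pub[k] != rec[k]),
        # arrived without being advertised
        "unexpected": sorted(k for k in rec if k not in pub),
    }


# Illustrative data only: the publisher advertises three days, one is lost in transit.
published = [
    ManifestEntry("us_ports", "2024-03-01", "aaa"),
    ManifestEntry("us_ports", "2024-03-02", "bbb"),
    ManifestEntry("us_ports", "2024-03-03", "ccc"),
]
received = [
    ManifestEntry("us_ports", "2024-03-01", "aaa"),
    ManifestEntry("us_ports", "2024-03-03", "ccc"),
]
report = reconcile(published, received)
```

Run on every hand-off, a check like this would flag a missing day when it goes missing, rather than years later when a customer calls.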

On top of that, yes, the preboarding was also often manual and convoluted. I.e. expensive and risky.

How would better preboarding help?

ShipmentInsights clearly had a preboarding process. In fact, more than three of them. Regrettably, all imperfect.

Better preboarding would have helped ShipmentInsights with their specific ingestion problem in several ways:

Data would be captured from incoming file feeds in a consistent way, across the board

Each file, and each version of each file, would be identified clearly with a durable ID that carried downstream

Data would be managed immutably, so every data file is always exactly what you expect it to be

Hand-offs between geos would be handled consistently, much the same as hand-overs from external data partners

The known-good raw data would be presented with its lineage in a permanent published data archive for easy cross-checking
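The identity and immutability points above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how CsvPath Framework or ShipmentInsights implements it: the `register_file` function, the directory layout, and the `manifest.json` name are all hypothetical. The idea is that a file's ID is derived from its bytes, each version is written once and never overwritten, and a small manifest records the lineage of versions.

```python
import hashlib
import json
from pathlib import Path


def register_file(archive: Path, feed: str, name: str, data: bytes) -> dict:
    """Store one arriving file immutably and return its manifest record.

    Hypothetical layout: <archive>/<feed>/<name>/<sha256> holds the bytes,
    and <archive>/<feed>/<name>/manifest.json lists the versions seen so far.
    """
    file_id = hashlib.sha256(data).hexdigest()  # durable ID: same bytes, same ID
    folder = archive / feed / name
    folder.mkdir(parents=True, exist_ok=True)

    target = folder / file_id
    if not target.exists():  # write once, never overwrite
        target.write_bytes(data)

    manifest_path = folder / "manifest.json"
    versions = json.loads(manifest_path.read_text()) if manifest_path.exists() else []
    if file_id not in [v["id"] for v in versions]:
        versions.append(
            {"id": file_id, "version": len(versions) + 1, "bytes": len(data)}
        )
        manifest_path.write_text(json.dumps(versions, indent=2))

    # Return the record for this exact content, new or previously seen.
    return next(v for v in versions if v["id"] == file_id)
```

Because the ID comes from the content, a re-delivered file with identical bytes maps to the same record, while a silently changed file shows up as a new version. Any downstream team can then cite the ID it loaded and have it checked against the archive.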

These improvements to ShipmentInsights's preboarding process would have simplified and clarified the data flow. Ideally to the point that Couture Du Sol would not have had the problem they did. Moreover, were such a problem to surface, a consistent and well-designed preboarding architecture would be quick to review, not a two-to-three-week slog by many people looking under rocks for unknown problems in an unfamiliar process.

DataOps teams spend up to 50% of their time firefighting. This thumbnail sketch of one preboarding disaster helps explain why.

Hooray, problem solved

With better data preboarding, ShipmentInsights could have protected that 4% of revenue. They could have scaled down their ongoing investment in error-prone manual processing. And their data could have had a shorter and more agile path to market, freeing more hands for high-value product dev and customer solutions work. All while customers were not complaining.

Sounds nice, right? ShipmentInsights thought so too. They put building a new preboarding process out to bid. Cognizant, EPAM, IBM and others made proposals. At the time it didn't go anywhere. The reason? Back then, nobody knew what good looked like. Everyone ShipmentInsights asked agreed finding out what good looked like would cost the sun, the moon, and the stars. That slowed things down, for sure.

Today we no longer have that problem.

CsvPath Framework is what good data preboarding looks like. It is the preboarding process that should have protected Couture Du Sol and made ShipmentInsights's business markedly more profitable. And CsvPath Framework, along with its FlightPath automation server, is open source. You can get the benefits of a purpose-built preboarding architecture at any scale with no licensing overhead to weigh you down.

Take a look at CsvPath Framework and compare your challenges to what I've described. Think about the possibilities of a more robust ingestion using a solid data preboarding approach. And stop shipping data through the Bermuda Triangle.