We Hold These Truths. Data Preboarding Isn't Enough.

Productivity is also a factor. Let's get into it.

Let’s look at a real data file feed preboarding system to understand how easily you can improve the preboarding stage of your data pipelines.

Better preboarding has a potentially huge productivity boost from eliminating manual data review. It also generates impressive productivity returns from cutting out data-fail firefighting. However, the automation economics need to pencil out. It matters what tools you use for preboarding. Spoiler alert, we think CsvPath Framework comes out looking very good in this comparison.

All these steps should be preboarding requirements:

Immutable staging

Durable data set identification

Validation and upgrading

Descriptive and lineage metadata

Immutable, queryable publishing to downstream

Integration with existing data infrastructure

All that would take a lot of person-hours to build from scratch. Believe you me; we did it, so we know. Moreover, you have to create the validation and upgrading scripts. Those are the beating heart of the push to reduce manual processes. If those scripts are hard to create, it’s hard to make a case for changing business as usual — even if the costs of business as usual are too high.

How hard is it to govern, really?

The federal government (in the US) does preboarding a lot, on a huge scale, and at great cost. The FDA, CDC, DHS, and all the other agencies aggregate their data in https://www.usaspending.gov/ using CSV file submissions. The site is an awesome effort to bring transparency to federal spending. As you can imagine, like the budgets it tracks, it is massive. The infrastructure is an impressive example of preboarding. It is all open source on Github.

To the folks who built this, if you’re out there, your work is awesome. I’m using it just as an illustration in hand-wavy mode, not factually-precise mode.

Most organizations don’t face this scale of data file feed ingestion challenge. Nevertheless, all companies collect data and make sure it is trustworthy, even though it comes from sources with different priorities, levels of technical sophistication, development cycles and SDLCs, etc., etc. Most of us are a microcosm of usaspending.gov, to some degree. And we don’t get it right, money is lost and developers and BizOps teams don’t sleep.

But data quality has to be affordable. The open source CsvPath Framework, and FlightPath products can help make data preboarding attainable, without the engineering effort of usaspending.gov.

How does CsvPath Framework lower the implementation cost?

First, a clear architecture. CsvPath Framework makes doing the right thing easy. Developers, architects, and BizOps people don’t sit in conference rooms trying to decide what to build. That saves time, opportunity cost, and money spent on consultants and coffee and bagels.

Second, implementing data validation rules using CsvPath Validation Language is often simpler than doing the same validations using SQL or Excel macros. Once you get started it can move quickly while being more understandable for everyone involved.

Third, CsvPath Framework often dovetails neatly with the way your data file feeds are handled today. It can be setup to consume and produce almost exactly the same filesystem directory structures and filenames, so other steps in the delivery chain may need only minor tweaks.

Of these three, the business rules automation simply has to be productive. Without that, the manual investment continues, even if other benefits of preboarding are realized. So let’s get those rules working! Looking at an example preboarding rule from usaspending.gov gives you a good idea of how CsvPath makes implementation easier.

Rubber, meet seriously hot tar

I’ll pause to say it again: this is an illustrative exercise only. I don’t know the details behind usaspending.gov’s business rules. And I don’t know if writing csvpaths would be the perfect answer for this specific system — no solution can possibly be right for every situation. Still, it is a good illustration of why considering CsvPath Framework for your preboarding is a very good idea.

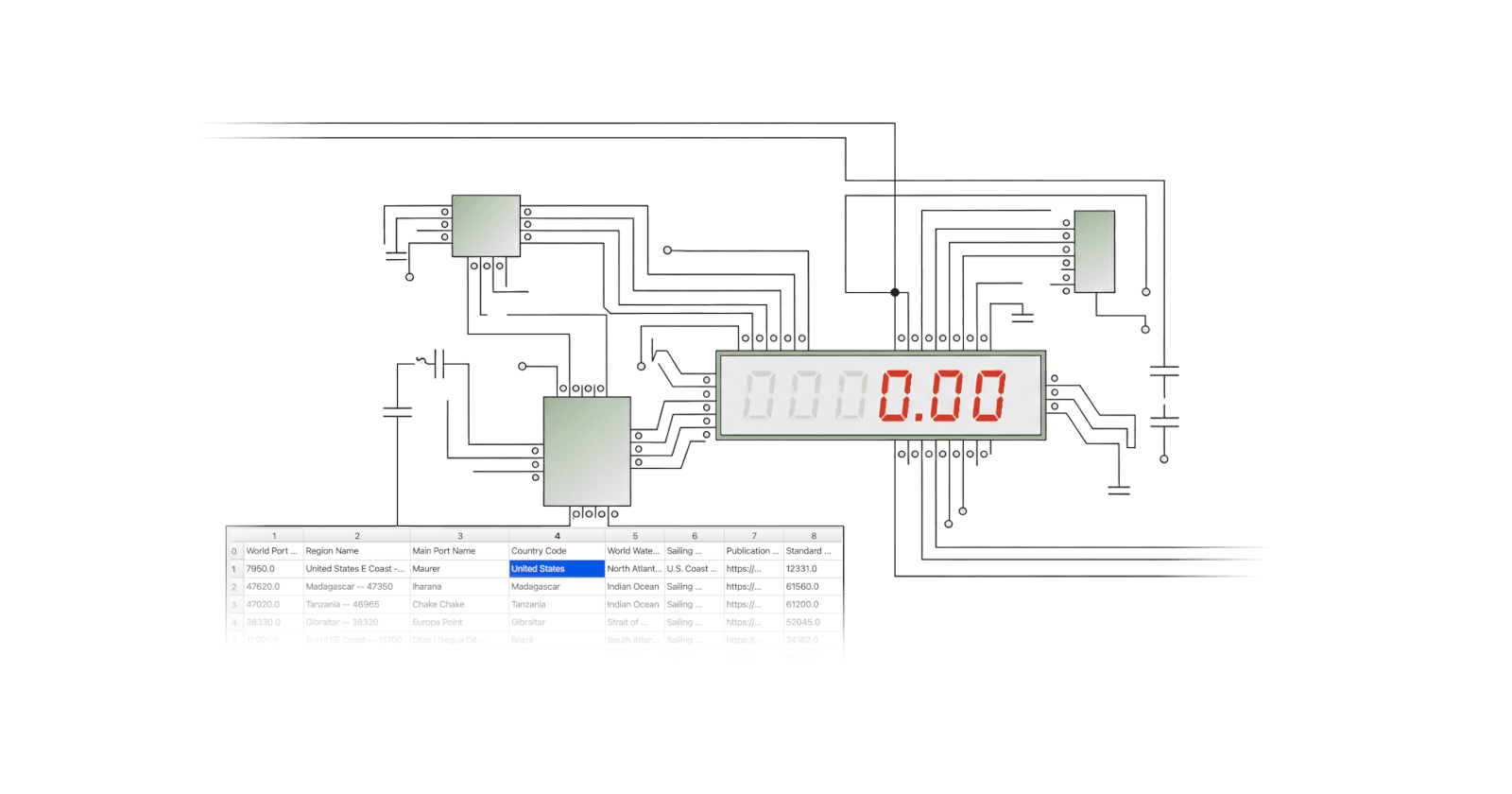

Data comes into the system as CSV files. It is loaded into a database. This is ELT — extract, load, transform. As part of the ELT process the data is identified, staged, and checked. I won’t go into how good the identification is, if the staging is immutable copy-on-write, how raw data can be found, and if its lineage is available, etc. I’m guessing that all checks out. These guys don’t mess around.

In the validation process there are hundreds of rules. They are all written in SQL, as you would expect from the architecture. Picking one at random, rule FABS31.1, let’s have a look. Yep, that’s a lot of SQL.

Now that’s not the most impenetrable SQL in the world; I’ve seen worse. But it’s a lot. Without the rule text I would have to find a developer and spend some time. Undoubtedly, I’d need an SME too. And the SME would probably not be able to pick apart the SQL themselves.

But here’s the thing. That rule text in the comment at the top is pretty understandable. By basically anyone. Plus or minus a few assumptions about what the look-up tables are like, you can probably sketch out how this rule works in about three lines. To wit, the rule text is three lines long.

Here is approximately the same rule in CsvPath Validation Language. It’s sitting in FlightPath Data, the development and ops console for FlightPath Server and CsvPath Framework.

$[*][

not( in( #LegalEntityCountryCode, #countries.variables.foreign_countries) ) -> take()

not(#ActionType == A) -> skip()

before(#ActionDate, date("%b %d, %Y")) -> skip()

none(#AwardeeOrRecipientUEI) -> skip()

not(

and(

in(#AwardeeOrRecipientUEI, $sam.variables.ids),

or(

after(#ActionDate, date("%b %d, %Y")),

between(

#ActionDate,

get($sam.variables.start_dates, #AwardeeOrRecipientUEI),

get($sam.variables.end_dates, #AwardeeOrRecipientUEI)

)

)

)

)

]

The three lines of rule definition text starts the top comment, followed by a five-line implementation note and the name of the rule. All in, 13 lines of comment. The rule itself is only 19 declarative lines. And those lines are easy to read. Compare that to 48 highly technical lines of SQL. I think you’ll see the advantage.

Now we assume a few things

Again, in case I have said it enough, we’re making a lot of assumptions and speculations here. Some of them are:

We assume a look-up list of foreign countries is available

We also assume that SAM registration dates are available for lookup by ID

These are not big asks. The SQL needs them too. We also made some assumptions about the result set required. Using CsvPath’s collect(#header) function to create the line-by-line results we want to keep is simple, but we would need to know more about the shape and naming of the data.

The final assumption is important. Usaspending.gov has a couple hundred quite complex rules. What are the performance parameters and requirements? SQL and CsvPath process data differently. CsvPath is more Spark-like, in that it works row-by-row. It’s hard to compare to SQL without testing, because the processing method is so different.

SQL is fast, but it can have performance bottlenecks. Some queries are molasses. But most queries operate on indexes, caches, and partitioning. It is likely that the SQL query would run faster. How much faster? Would there be a meaningful difference for an automated, lights-out process? Hard to say without more information. This rule is very likely doable. And most of us have much less data than the feds, so we might not care.

And one last question: could the csvpath be even better? Would adding a multi-rule schema be more efficient? What about using breadth-first execution? Could I simplify the logic even more? Answering these questions might have real upside.

Directionally, this is interesting!

Imagine all the benefits for your own data estate. No architectural vision to suss out. No need to build the preboarding solution. An easy fit with existing infrastructure. Up and running first trials in just days. And a simple business validation language that mortals can read, and maybe even write for themselves. Imagine.

Yes, it is a somewhat artificial example, given the number of things we don’t know about usaspending.gov. But, nevertheless, the opportunity is clear. You owe it to yourself, your devs and your BizOps team to consider a simpler preboarding solution: CsvPath Framework and FlightPath Server.

And hopefully the usaspending.gov example reinforces the point that preboarding is important. Uncle Sam wants you to preboard your data — however you choose to do it!