Your Data Lake Is Selling Sketchy Goods

The Retail Analogy For Why You Need Data Preboarding

Consider This Data Ingestion Analogy

Bob the shopkeeper runs an office supply store. He has steady foot traffic in a mall and does well.

Early one day, Bob calls his distributor and orders pens, paperclips, and reams of paper. Shortly, his distributor’s truck pulls up with a delivery. The driver drops six large crates on the dock. Bob signs and the truck drives off.

Bob rips open the boxes and immediately runs all the goods out to the sales floor. He props open the front door and welcomes shoppers into the store.

Now, a question for you:

Has this ever happened?

No, never! Not since goods showed up in horse-drawn carts.

For sure, Bob places an order and the truck comes. But then Bob does something radical, and this is where the data analogy comes in. He ingests his delivery methodically by:

Opening the boxes and checking the quantity of goods

Looking to see if there is breakage or incorrect items

Scanning each item as he unpacks the shipment

Putting each item on inventory shelves in date order

Updating the cost basis and pricing in the inventory system

Only after doing all that does Bob select which items should go on the shelf for customers to buy.

Of course he does it that way! How else would you run a successful shop?

Bob’s delivery handling process is very similar to how your organization should take in data from its data partners. Data products are like any other products. They have value and should be handled with care.

Let’s Do Ingestion DataOps Like Bob

If we handled inbound data files like Bob handles retail goods we would:

Collect the data files into immutable versioned storage with clear naming

Give each item of data a unique identifier that is durable through the intake process and beyond

Validate that the files contain the data expected in correct form and in the amount required

Idempotently upgrade any malformed data to its ideal raw-data form

Publish the data and metadata files to an immutable permanent archive available to downstream data consumers
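The five steps above can be sketched in a few dozen lines of Python. This is a minimal illustration using only the standard library, not FlightPath or CsvPath Framework code. The expected header, the minimum row count, the directory layout, the row identifier format, and every function name here are assumptions made for the example.

```python
import csv
import hashlib
import io
import json
from pathlib import Path

EXPECTED_HEADER = ["sku", "quantity", "unit_cost"]  # hypothetical schema
MIN_ROWS = 1  # hypothetical minimum delivery size


def write_once(path: Path, data: bytes) -> None:
    """Write a file only if it does not exist yet, so stored files never change."""
    if not path.exists():
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)


def validate(header, rows):
    """Return a list of problems; an empty list means the file passed."""
    errors = []
    if header != EXPECTED_HEADER:
        errors.append(f"unexpected header: {header}")
    if len(rows) < MIN_ROWS:
        errors.append(f"expected at least {MIN_ROWS} rows, got {len(rows)}")
    for n, row in enumerate(rows, start=1):
        if len(row) != len(EXPECTED_HEADER):
            errors.append(f"row {n}: expected {len(EXPECTED_HEADER)} fields")
    return errors


def upgrade(rows):
    """Trim stray whitespace; running it twice gives the same result."""
    return [[cell.strip() for cell in row] for row in rows]


def preboard(raw: bytes, name: str, archive: Path) -> dict:
    # 1. Collect: the version is derived from the content, so the same
    #    delivery always lands in the same place.
    digest = hashlib.sha256(raw).hexdigest()
    version = digest[:12]
    home = archive / name / version
    write_once(home / "original.csv", raw)

    table = list(csv.reader(io.StringIO(raw.decode("utf-8"), newline="")))
    header, rows = (table[0], table[1:]) if table else ([], [])

    # 2. Identify: each row gets an id built from name, version, and position.
    ids = [f"{name}:{version}:{n}" for n in range(1, len(rows) + 1)]

    # 3. Validate, then 4. upgrade.
    errors = validate(header, rows)
    rows = upgrade(rows)

    # 5. Publish: metadata always, data only when validation passed.
    manifest = {"name": name, "version": version, "sha256": digest,
                "row_count": len(rows), "errors": errors,
                "published": not errors}
    write_once(home / "manifest.json", json.dumps(manifest, indent=2).encode())
    if not errors:
        out = io.StringIO(newline="")
        writer = csv.writer(out)
        writer.writerow(["row_id"] + header)
        for row_id, row in zip(ids, rows):
            writer.writerow([row_id] + row)
        write_once(home / "published.csv", out.getvalue().encode("utf-8"))
    return manifest
```

Because the version and the row identifiers come from the file content rather than from a clock or a random number, preboarding the same delivery twice produces the same archive and the same manifest. A file that fails validation still gets its original copy and a manifest recording the errors, but nothing is published for downstream consumers.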

Of course we would do it that way! How else would you run a successful data ingestion operation?

This Is Data Preboarding!

Data preboarding is a method of data ingestion. When you preboard data, you run a methodical intake process that lets you load “ideal-form” raw data into your data lake, data warehouse, or application. The preboarding process makes sure your raw data is trustworthy, under control, and traceable. This is edge data governance that makes a difference!

With the guarantees data preboarding provides, your loading process can focus on the specific needs of each downstream data consumer. Data consumers need joins, splits, mastering, schema mapping, format transformations, parallel processing, multiple system loading, aggregation and summation, and a host of other business requirement steps. What data consumers don’t need is firefighting untrustworthy data from unclear sources with poor provenance, uncontrolled changes, and processing steps that may or may not have happened.

No really, data consumers don’t need that!

Everyone Preboards Their Data… Somehow

We say that data files have been preboarded when all the steps have been addressed: identification, versioned storage, validation, upgrading, metadata tracking, and publishing. But really, preboarding is whatever you do before your data is accepted into the technology organization. If you simply throw a file from MFT (managed file transfer) right into the data lake, then that’s your preboarding process. Not a very good one, but there it is.

Historically, the problems with doing data preboarding well have been:

It takes effort to successfully have nothing happen — nothing in this case is good!

There have been few well-known architectures and few tools specifically for data preboarding

CSV files, the ugliest and most problematic data, are hard and frustrating

Because of the lack of glamorous architectures, the pile of old, unloved scripts, and the goal of invisible success, the best people gravitate elsewhere

And yet, the problems of not preboarding your data well are every bit as urgent as the problems of having your data center fail or your website take a siesta every day. Big money and reputational risk are tied up in handling those unglamorous CSV files!

Making Data Preboarding Exciting

Business-existential work that is hard, yet has the potential for the kind of innovation that gets the spotlight, will always find its way into the hands of the best people. At least, it will if you focus on how good your data preboarding can and should be.

There are exciting architectures and tools for data preboarding that are an order of magnitude more interesting to create than the early-days file-system heaps of data we called a data lake. And the win from lowering the cost of manual data processing, ending firefighting, and protecting dollars-and-cents liability should be held up as the big deal that it is.

All it takes is good tools and the realization that data preboarding makes it possible to win. And, of course, it also takes the actual desire to win the data game. It definitely takes that.

Go Forth and Preboard Your Data!

If you’re ready to get control of your data partnerships and their out-of-control CSVs, Excel files, and other tabular data, look to FlightPath.

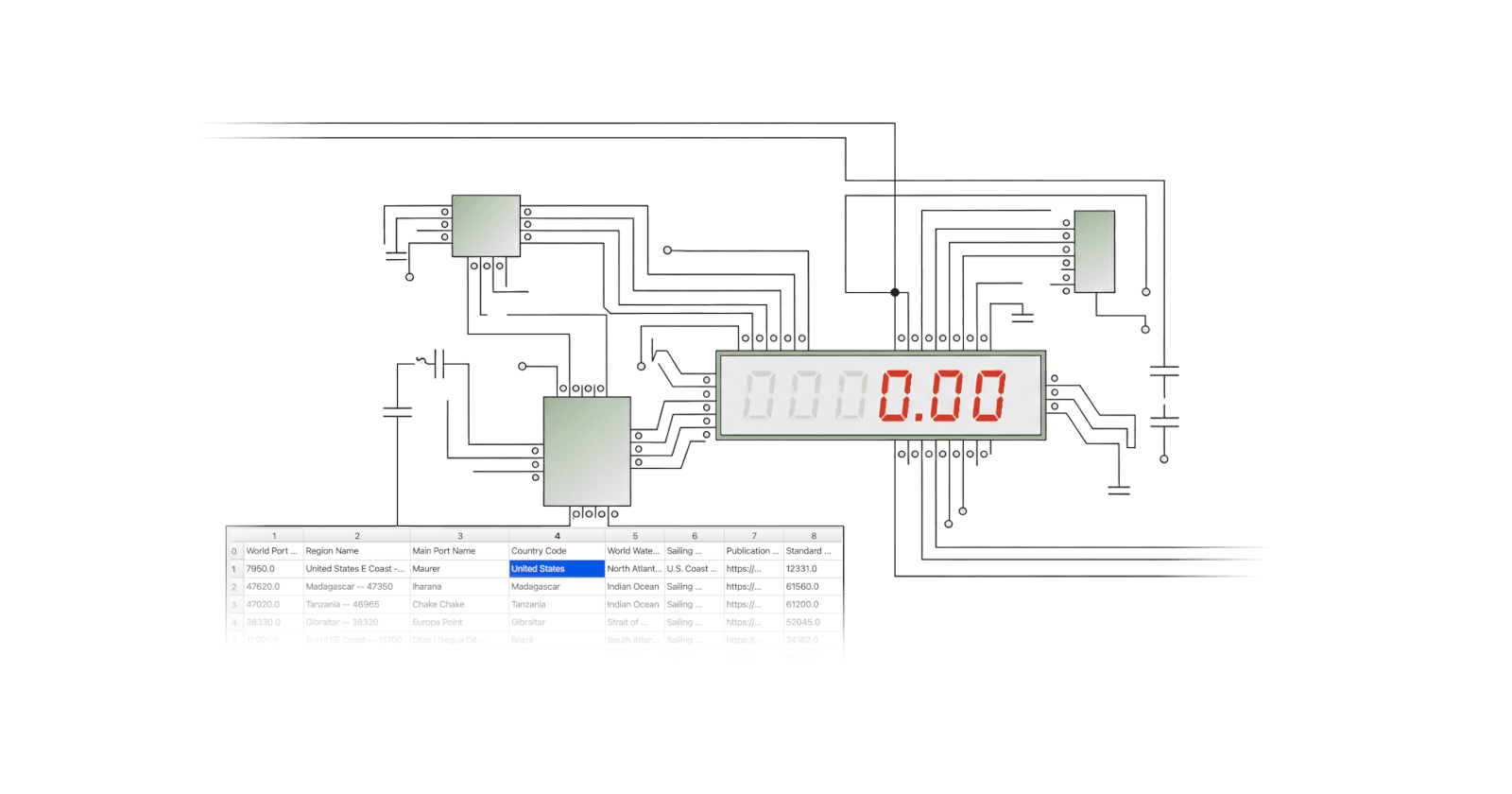

FlightPath is a drop-in solution based on CsvPath Framework. It is the preeminent data preboarding architecture. And it packs the validation, lineage, and data staging tooling you need to run a successful DataOps ingestion process.