Well-formed, Valid, Canonical, and Correct

Words have meaning, right? Are you misusing these?

The world of data is massively multi-dimensional. One of the most important dimensions is data validation. Without validation, you got nothing. Sometimes less than nothing. But as central as validation is, how we talk about it is often loose and limited.

This post defines a few terms relating to validation. Not infrequently we just skate past these concepts. We're talking — or not — about levels of data acceptance. How good do we feel about an item of data or a data set as a whole?

While acceptance is ultimately a boolean, the world of data isn't just black and white. Garbage-in, garbage-out is definitely a thing. But one person's trash may be another person's treasure. And your grass-fed raw data may need to be cooked before I can eat it. The terms in the title build on one another. Each one is an f-stop in the vision for what good looks like.

How acceptable is this?

The terms in question are well-formed, valid, canonical, and correct. I list them in order from a data consumer's perspective — least specified to most. Why is their relationship important? Generally because data goes through stages, from acquisition to preboarding to ETL to enrichment and mastering to production end uses. If we can't speak about levels of quality in acceptance terms at each step, how could we know when to progress data to the next stage?

This progression is a practical matter for tool builders as well. How does MFT (managed file transfer) know when to progress data from arrival to preboarding? How does preboarding progress to onboarding and workflow tools? What are the stages of the medallion data lake, specifically?

Above all, do we know what good looks like? Are we moving too fast? How do we know when we're done? Are we there yet?

Well-formedness

Data that is well-formed first and foremost matches a physical specification, and, secondly, has the correct "outline" to be an item of data of the form expected. The specifications are standards like:

Well-formedness also relies on lower-level definitions such as Unicode and byte ordering. Without detailed agreements on what constitutes minimally viable raw data, the world quickly breaks down through an inability to communicate.
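
To make that concrete, here is a minimal sketch of a well-formedness check for a CSV file, using only Python's standard library. It assumes UTF-8 as the agreed encoding and treats "every row has as many fields as the header" as the expected outline. Both are assumptions for illustration, and the function name `is_well_formed_csv` is made up for this post.

```python
import csv
import io


def is_well_formed_csv(raw: bytes, encoding: str = "utf-8") -> bool:
    """Return True if the bytes decode cleanly and every row matches the header width."""
    try:
        # Physical level: the bytes must be legal in the agreed encoding.
        text = raw.decode(encoding)
    except UnicodeDecodeError:
        return False
    try:
        # strict=True asks the csv module to raise csv.Error on bad CSV input.
        rows = list(csv.reader(io.StringIO(text, newline=""), strict=True))
    except csv.Error:
        return False
    if not rows:
        return False  # no header line, so there is no outline to check
    width = len(rows[0])
    # Outline level: each row has the same number of fields as the header.
    return all(len(row) == width for row in rows)
```

Notice that nothing here looks at what the values mean. The check only asks whether the bytes can be read as the kind of thing we expected.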

Valid

The next level up from well-formed is validity. Validity is a more robust stage, in that if data is valid, it is probably useful for something.

Valid files contain data that has been compared against a definition of what good data looks like, and that matches it. Data can be validated using rules or models. Well-known examples include:

Some of us love these specs, despite their dryness. Each has its own strengths and coolnesses. An XSD is primarily a model. A Schematron file is principally rules. In fact, a model is a shorthand, generalized way of writing rules. And, in this context, a set of rules is just a classification. But in practice it's simple: an item of data that doesn't match its schema is considered invalid.
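
The idea that a model is shorthand for rules can be sketched in a few lines of Python. This is an illustration only, not how XSD, Schematron, or CsvPath work internally. The names `MODEL`, `rules_from_model`, and `is_valid`, and the two column names, are hypothetical.

```python
from typing import Callable, Dict, List

Row = Dict[str, str]
Rule = Callable[[Row], bool]

# Hypothetical model: each expected column maps to a check on its value.
MODEL: Dict[str, Callable[[str], bool]] = {
    "company": lambda value: value.strip() != "",
    "industry": lambda value: value.strip() != "",
}


def rules_from_model(model: Dict[str, Callable[[str], bool]]) -> List[Rule]:
    """Expand the compact model into one explicit rule per column."""
    def make_rule(column: str, check: Callable[[str], bool]) -> Rule:
        # The rule fails if the column is missing or its value fails the check.
        return lambda row: column in row and check(row[column])

    return [make_rule(column, check) for column, check in model.items()]


def is_valid(row: Row, rules: List[Rule]) -> bool:
    """An item of data is valid only if it passes every rule."""
    return all(rule(row) for rule in rules)
```

A row such as `{"company": "IBM", "industry": "Sunflower Farming"}` passes every rule generated from `MODEL`, while a row with an empty or missing `industry` does not.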

Canonical

A canonical form is the form that is preferred over other possible forms of the same data. A simple example is the term IBM. Its canonical form may be IBM. It may also be seen as I.B.M. or International Business Machines. If we are canonicalizing data using this mapping to IBM and we see I.B.M., we substitute the canonical form. Note that if there are multiple accepted forms, any of them can be the canonical form, given the right time, place, or bounded context. Canonicalization is closely related to data mastering.
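
As a rough sketch, the IBM mapping could be a plain lookup table in Python. The names `CANONICAL_FORMS` and `canonicalize` are invented for this example. Passing the mapping as a parameter is one way to let a different bounded context bring its own preferred forms.

```python
from typing import Dict

# Variant spellings mapped to the preferred form, using the IBM example.
CANONICAL_FORMS: Dict[str, str] = {
    "I.B.M.": "IBM",
    "International Business Machines": "IBM",
}


def canonicalize(name: str, mapping: Dict[str, str] = CANONICAL_FORMS) -> str:
    """Return the canonical form of name, or the trimmed name if no mapping applies."""
    cleaned = name.strip()
    return mapping.get(cleaned, cleaned)
```

Values that are already canonical, or that the mapping knows nothing about, pass through unchanged apart from trimmed whitespace.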

Correct

Correct data is more than well-formed + valid + canonicalized. Correct means that the semantic and business rule content of the data meets expectations. For example, imagine a CSV file that includes a list of companies. Each company has an area of commercial activity. We see that:

- The file is readable as a CSV file, so it is well-formed
- The file has values under all headers in all rows, so for our purposes we'll call it valid
- The company name I.B.M. has been canonicalized to IBM, so we'll say that the data is in a canonical form
- And the company listed as IBM is described as being in the business of Sunflower Farming

Because the last bullet rests on sketchy intelligence (we don't think IBM grows sunflowers, but maybe?), we'll say that this data is incorrect. Ultimately, correctness is the most important consideration. And if the lower acceptance layers are good to go, the effort needed to make the data actually correct may well be worth it. Or maybe IBM should start growing sunflowers. Actually, both things can be true.
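
Here is one way the sunflower example might look as a staged check in Python: a sketch that reports the highest acceptance level a small CSV reaches. Everything named here is an assumption made for illustration: the `company` and `industry` headers, the function `acceptance_level`, and especially the `EXPECTED_INDUSTRY` reference table, which stands in for whatever business knowledge you actually trust.

```python
import csv
import io
from typing import Optional

# Hypothetical lookup tables, invented for this example.
NON_CANONICAL = {"I.B.M.": "IBM", "International Business Machines": "IBM"}
EXPECTED_INDUSTRY = {"IBM": "Information Technology"}
REQUIRED_HEADERS = {"company", "industry"}


def acceptance_level(text: str) -> Optional[str]:
    """Return the highest level reached, or None if the text is not well-formed."""
    reader = csv.DictReader(io.StringIO(text, newline=""), strict=True)
    try:
        rows = list(reader)
    except csv.Error:
        return None
    # DictReader files extra fields under the key None and fills missing ones with None.
    if not reader.fieldnames or any(None in r or None in r.values() for r in rows):
        return None
    # Valid: the expected headers exist and every cell has a value.
    if not REQUIRED_HEADERS <= set(reader.fieldnames):
        return "well-formed"
    if not all(value.strip() for r in rows for value in r.values()):
        return "well-formed"
    # Canonical: no company name is a known non-preferred variant.
    if any(r["company"] in NON_CANONICAL for r in rows):
        return "valid"
    # Correct: where we hold reference data, the industry must agree with it.
    for r in rows:
        expected = EXPECTED_INDUSTRY.get(r["company"])
        if expected is not None and r["industry"] != expected:
            return "canonical"
    return "correct"
```

Fed the file described above (`company,industry` followed by `IBM,Sunflower Farming`), this sketch stops at `"canonical"`: the lower layers are good to go, and only the business expectation fails.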

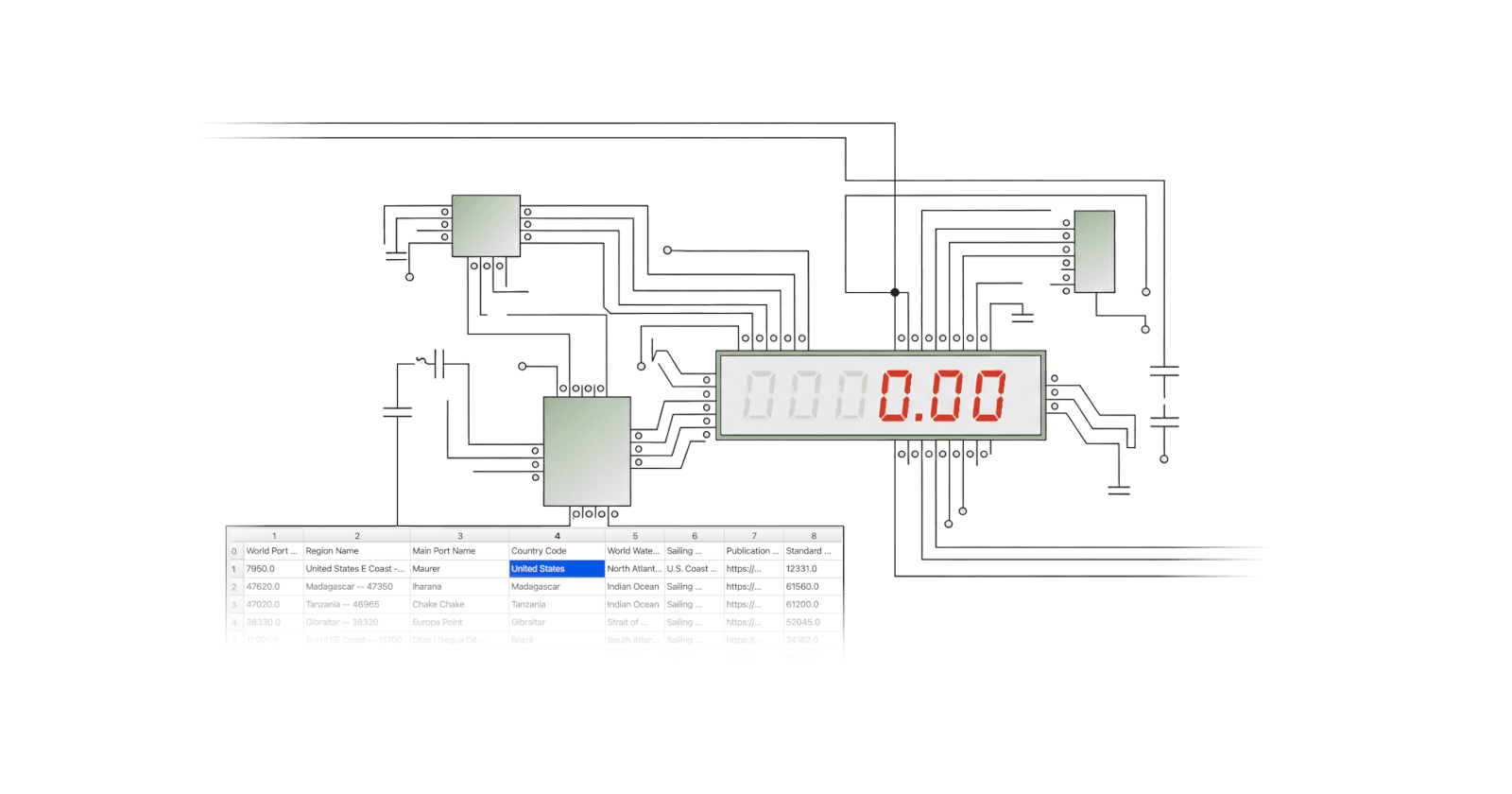

Where CsvPath validation can help

Our focus is on the ingestion strategy called data preboarding. Preboarding takes files that arrive kinda looking like data and whips them into shape so you know you in fact have good data.

Historically, CSV files have not had a commonly used validation language. CsvPath Validation Language is a new language to help change that. It gives you the validity, canonicalization, and correctness checks you need to trust an unknown data file. CsvPath Validation Language offers both rules and schemas.

In other posts we'll talk more about CsvPath Validation Language. It is a powerful function-based language for both schemas and business rules, embedded in a complete preboarding architecture, so there's a lot to get excited about! Stay tuned or, if you can’t wait, bop over to https://www.csvpath.org and CsvPath Framework’s GitHub repo.